Step 7: Enter other configurations for your cluster. Step 6: Inspect the size of your data or estimate the size.

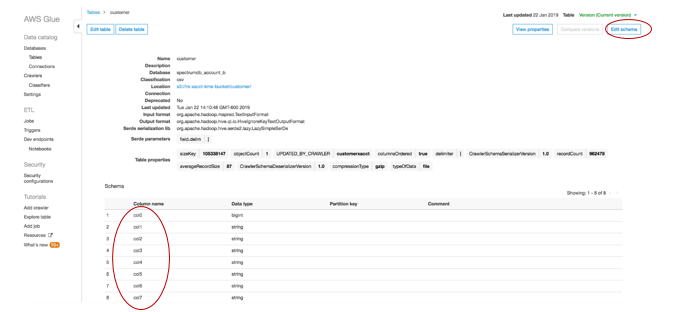

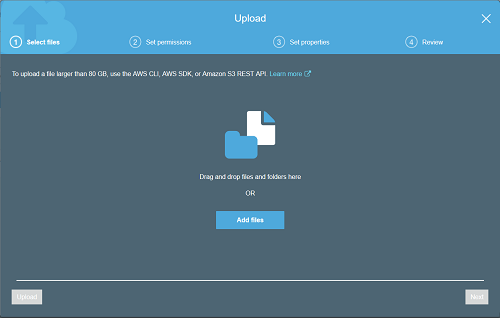

Preview 2022 to allow you to test the new features. Next select from the drop-down menu a Preview track e.g. Step 5: Create a unique name to identify your cluster in lowercase letters. Step 4: Click Create preview cluster in the banner. In this preview, I will use the AWS region US-East (Ohio) (us-east-2). Step 3: Create a cluster in Preview by selecting Provision clusters dashboard Step 2: Navigate to Amazon Redshift by typing 'Redshift' in the search bar. Step 1: Sign into the AWS Management Console with your AWS IAM Admin User account details. Tutorial 1: Getting started with auto-copy files from Amazon S3(Preview) into Amazon Redshiftįor this tutorial we will follow the steps outlined in the Amazon Redshift Database Developer Guide Using AWS Glue to create a crawler to inspect the schema in one file from the data catalogue: There are five datasets ranging from 2015 to 2019 The World Happiness Report dataset is available to download from Kaggle here. You may follow this blog to learn how to create a crawler. Use AWS Glue to create a crawler to inspect the meta data in the data catalogue table. You may view this blog to create your first S3 bucket. You may view the blog to create an account.Ĭreate an Amazon S3 bucket. Login into your AWS account as an IAM Admin user. This tutorial includes data from Kaggle at this link In this high-level architecture, new files are ingested into a single Amazon S3 bucket into separate folders and a copy job inserts the data into the corresponding Table A and Table B.ĭownload an interesting open source dataset and save it in your directory. 
You can save time for your team by avoiding manual uploads of new data from an S3 bucket into Amazon Redshift with COPY statements.ĬOPY jobs will be able to detect data that was previously loaded in the data ingestion process.ĬOPY jobs can prevent duplicated data when an automated job is not required.ĬOPY jobs can be manually created to reuse copy statements You may store a COPY command in a COPY job in Amazon Redshift which will detect new files stored in Amazon S3 and load the data into your table. New files can be ingested in the formats csv, json, parquet or avro. What is Amazon Redshift Auto-Copy from Amazon S3 ?Īmazon Redshift auto-copy from Amazon S3 simplifies data ingestion from Amazon S3.Īuto-copy from Amazon S3 is a simple, low code data ingestion that automatically loads new files that are detected in your S3 bucket into Amazon Redshift. In this lesson you will learn how to ingest data with auto-copy from Amazon S3 into Amazon Redshift.You may be a data scientist, business analyst or data analyst familiar with loading data from Amazon S3 into Amazon Redshift using the COPY command, at AWS re:invent 2022 to help AWS customers move towards a zero-ETL future without the need for a data engineer to build an ETL pipeline, data movements can be simplified with auto-copy from Amazon S3 into Amazon Redshift.
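The COPY job idea described in this lesson can be sketched as a small Python helper that builds the SQL text for one job per S3 folder, matching the Table A and Table B architecture. This is only an illustration: the table names, bucket, folder prefixes, job names and IAM role ARN are hypothetical placeholders, and the `JOB CREATE ... AUTO ON` clause reflects my understanding of the COPY JOB syntax in the Amazon Redshift Database Developer Guide, so check it against the current documentation before running it.

```python
# Format clauses for the four file formats named in this lesson.
SUPPORTED_FORMATS = {
    "CSV": "FORMAT AS CSV",
    "JSON": "FORMAT AS JSON 'auto'",
    "PARQUET": "FORMAT AS PARQUET",
    "AVRO": "FORMAT AS AVRO 'auto'",
}


def build_copy_job_sql(table, s3_prefix, iam_role_arn, job_name,
                       file_format="CSV", auto=True):
    """Return the text of a COPY statement stored as a COPY job.

    All argument values are supplied by the caller; nothing here
    connects to AWS or executes SQL.
    """
    fmt = file_format.upper()
    if fmt not in SUPPORTED_FORMATS:
        raise ValueError(f"unsupported format: {file_format}")
    if not s3_prefix.startswith("s3://"):
        raise ValueError("s3_prefix must start with s3://")
    # AUTO ON loads new files as they are detected; AUTO OFF leaves
    # the job to be run manually.
    auto_clause = "AUTO ON" if auto else "AUTO OFF"
    return "\n".join([
        f"COPY {table}",
        f"FROM '{s3_prefix}'",
        f"IAM_ROLE '{iam_role_arn}'",
        SUPPORTED_FORMATS[fmt],
        f"JOB CREATE {job_name}",
        f"{auto_clause};",
    ])


if __name__ == "__main__":
    # Hypothetical placeholders: replace with your own role, bucket and tables.
    role = "arn:aws:iam::123456789012:role/MyRedshiftRole"
    jobs = [
        ("table_a", "s3://my-bucket/folder-a/", "job_table_a"),
        ("table_b", "s3://my-bucket/folder-b/", "job_table_b"),
    ]
    for table, prefix, job in jobs:
        print(build_copy_job_sql(table, prefix, role, job))
        print()
```

The generated statements are meant to be pasted into a SQL client connected to the preview cluster. Passing `auto=False` produces a job that is not triggered automatically, which corresponds to the case above where an automated job is not required but the stored COPY statement is still reused.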
